Gemma is the Product Manager for Fraud and Anti-Money Laundering (FRAML) Analytics at Experian. With a robust background in mathematics and experience as an analyst, she possesses deep technical expertise in integrating machine learning for insights and modelling in fraud and financial crime.

Artificial Intelligence (AI) and Machine Learning (ML) mark a new era in fraud detection, empowering algorithms to be both proactive and predictive, spotting patterns and potential fraudulent activity before it happens.

However, as financial transactions embrace digital advancements, so do the tactics used by fraudsters trying to steal people’s information, identities, and money.

It’s crucial for organisations to continually refine their approaches and stay on top of the latest fraud trends and predictions so they can detect and prevent fraud. The same AI capabilities used for good can be manipulated for more sophisticated fraudulent activities, as the rise in generative AI fraud shows.

AI fraud detection is the use of machine learning techniques to identify and prevent fraudulent activities by analysing large datasets and recognising patterns that indicate potential fraud. AI models learn from trends and can highlight suspicious attributes or relationships that a human analyst might miss but that indicate a larger pattern of fraud.

“Machine learning has become an invaluable tool in the fight against fraud, helping companies move from reactive to proactive by highlighting suspicious attributes or relationships that may be invisible to the naked eye but indicate a larger pattern of fraud.”

Gemma Martin

When addressing the benefits of using machines to detect fraudulent activity, XYZ said: “The great value of machine learning is the sheer volume of data you can analyse, but selecting the correct data and approach is critical. Supervised learning, which incorporates prior knowledge of fraud tactics to guide pattern identification, is effective because it’s easy to teach the machine once there’s a clear target for it to learn.”

Unsupervised machine learning techniques can be used when labelled outcome data isn’t available to guide the model. These anomaly detection models help pick up on unknown trends or aberrations in transactions, and can isolate smaller ‘pockets’ of unusual activity.

By combining the two, the system can recognise previous patterns of confirmed fraud, and raise the alert if a pattern of activity changes, increasing fraud detection rates and reducing false positives.
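To make the combination concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not a production approach: the data is made up, a simple fraud-rate lookup stands in for a trained supervised model, and a z-score on a customer's own spending stands in for an unsupervised anomaly detector. The function names and cutoffs are hypothetical.

```python
from statistics import mean, pstdev

# Hypothetical labelled history: (amount, is_new_payee, confirmed_fraud).
# All values are invented for illustration.
LABELLED_HISTORY = [
    (20.0, False, False), (35.0, False, False), (50.0, True, False),
    (42.0, False, False), (900.0, True, True), (30.0, False, False),
    (1200.0, True, True), (25.0, True, False),
]

# Hypothetical unlabelled recent spending for one customer.
RECENT_AMOUNTS = [20.0, 35.0, 42.0, 30.0, 25.0]


def supervised_score(is_new_payee, history=LABELLED_HISTORY):
    """Share of past transactions with the same attribute that were confirmed fraud.

    A stand-in for a trained classifier: it learns from labelled outcomes.
    """
    outcomes = [fraud for _, new_payee, fraud in history if new_payee == is_new_payee]
    return sum(outcomes) / len(outcomes) if outcomes else 0.0


def anomaly_score(amount, recent_amounts):
    """Distance of the amount from the customer's usual spending, in standard deviations.

    A stand-in for an anomaly detector: it needs no fraud labels.
    """
    spread = pstdev(recent_amounts)
    if spread == 0:
        return 0.0
    return abs(amount - mean(recent_amounts)) / spread


def should_alert(amount, is_new_payee, recent_amounts,
                 fraud_rate_cutoff=0.5, z_cutoff=3.0):
    """Alert on a known fraud pattern OR on a change in behaviour."""
    known_pattern = supervised_score(is_new_payee) >= fraud_rate_cutoff
    changed_behaviour = anomaly_score(amount, recent_amounts) >= z_cutoff
    return known_pattern or changed_behaviour


for amount, new_payee in [(32.0, False), (500.0, False), (32.0, True)]:
    print(amount, new_payee, should_alert(amount, new_payee, RECENT_AMOUNTS))
```

With this made-up data, a typical payment to a known payee passes, while an unusually large payment or a payment matching a previously confirmed pattern raises an alert. In practice the cutoffs would need tuning to balance detection rates against false positives.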

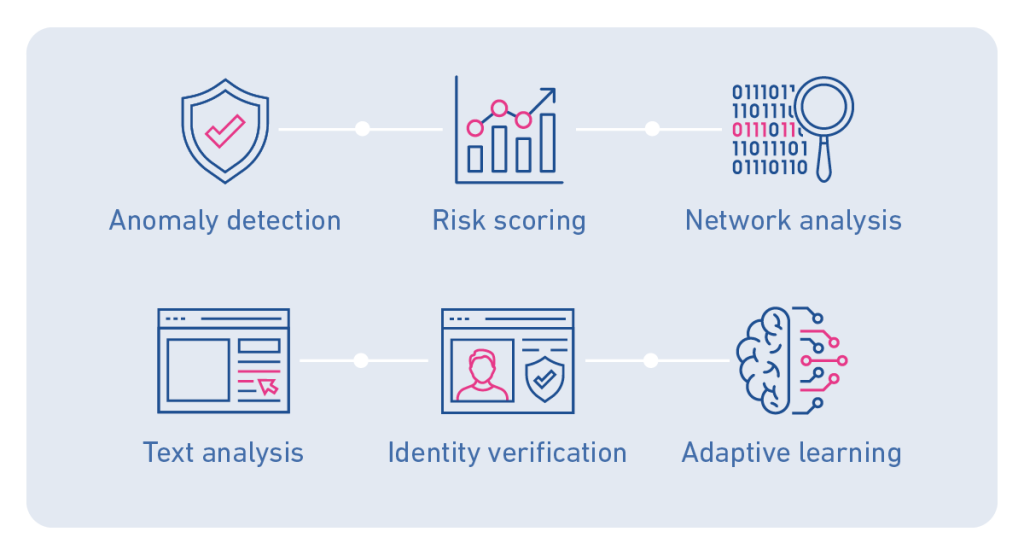

Some common applications of AI and machine learning in fraud prevention include:

Machine-learning algorithms identify unusual patterns or deviations from normal behaviour in transactional data. By training on historical data, the algorithms recognise legitimate transactions and flag suspicious activities indicating potential fraud.

Machine-learning models assign risk scores to transactions or user accounts based on various factors, such as transaction amount, location, frequency, and past behaviour. Higher risk scores help prioritise resources and focus on specific transactions or accounts that warrant further investigation.

Machine-learning techniques, like graph analysis, uncover fraudulent networks by analysing relationships between entities and identifying unusual connections or clusters.

Algorithms analyse unstructured text data like social media posts and emails to identify patterns or keywords indicating fraud or scams.

Machine-learning models verify user-provided information, such as identification documents or facial recognition data, preventing identity theft.

Machine learning adapts to new information, allowing models to stay up to date and detect emerging fraud patterns as tactics evolve.
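Of the applications above, the graph analysis idea is perhaps the easiest to sketch. The example below assumes that accounts sharing an identifier (a phone number or a device) are connected, and groups connected accounts into clusters using a union-find structure. The account data, identifier format, and cluster-size cutoff are all hypothetical.

```python
from collections import defaultdict

# Hypothetical accounts and the identifiers seen on each. Invented for illustration.
ACCOUNTS = {
    "acc1": {"phone:111", "device:A"},
    "acc2": {"phone:222", "device:A"},
    "acc3": {"phone:222", "device:B"},
    "acc4": {"phone:333", "device:C"},
}


def linked_clusters(accounts):
    """Group accounts that are connected through any shared identifier."""
    parent = {acc: acc for acc in accounts}

    def find(acc):
        # Follow parent links to the cluster root, shortening the path as we go.
        while parent[acc] != acc:
            parent[acc] = parent[parent[acc]]
            acc = parent[acc]
        return acc

    def union(a, b):
        parent[find(a)] = find(b)

    first_seen = {}  # identifier -> first account it appeared on
    for acc, identifiers in accounts.items():
        for ident in identifiers:
            if ident in first_seen:
                union(acc, first_seen[ident])
            else:
                first_seen[ident] = acc

    clusters = defaultdict(set)
    for acc in accounts:
        clusters[find(acc)].add(acc)
    # Largest clusters first, members sorted for stable output.
    return sorted((sorted(members) for members in clusters.values()),
                  key=lambda c: (-len(c), c))


CLUSTERS = linked_clusters(ACCOUNTS)
# Assumed cutoff: three or more linked accounts is worth a closer look.
SUSPICIOUS = [c for c in CLUSTERS if len(c) >= 3]
print(CLUSTERS)
print(SUSPICIOUS)
```

Here acc1 and acc2 share a device, and acc2 and acc3 share a phone number, so all three land in one cluster even though acc1 and acc3 have nothing directly in common. A shared identifier is not proof of fraud on its own; a cluster like this is a prompt for investigation.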

Generative AI, which includes techniques such as Generative Adversarial Networks (GANs), has revolutionised various industries with its ability to create realistic and plausible data from a few simple prompts. However, the same technology that brings innovation and creativity also poses a significant threat and creates new challenges for fraud prevention.

The more Generative AI advances, the bigger the threat it poses. New techniques and applications are constantly emerging, requiring continuous efforts from cybersecurity experts to stay ahead of potential risks.

People, businesses, and policymakers must stay informed about the latest trends and capabilities, and develop robust strategies to counteract the associated risks.

Just as Gen AI can predict the next word in a sentence – whether that be in texts, in emails, or when you’re inputting your search query online – machine learning models can predict the likelihood of someone being a fraudster. Each involves making predictions based on patterns and associations in data.
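The parallel can be shown with a deliberately tiny sketch. Real generative models and fraud models are far more sophisticated, but at this toy scale both reduce to counting what tends to follow what. The sentences, the device attribute, and the fraud labels below are all made up for illustration.

```python
from collections import Counter, defaultdict


def next_word_model(sentences):
    """Count which word follows each word in the example sentences."""
    follows = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows


def predict_next(follows, word):
    """Return the most frequently observed next word, or None if unseen."""
    counts = follows.get(word.lower())
    return counts.most_common(1)[0][0] if counts else None


def fraud_rate_model(records):
    """Estimate the likelihood of fraud for each attribute value from past outcomes."""
    totals, frauds = Counter(), Counter()
    for attribute, is_fraud in records:
        totals[attribute] += 1
        frauds[attribute] += int(is_fraud)
    return {attribute: frauds[attribute] / totals[attribute] for attribute in totals}


# Hypothetical training text.
SENTENCES = [
    "please confirm your payment",
    "please confirm your identity",
    "please confirm your payment details",
]

# Hypothetical past outcomes: (device attribute, confirmed fraud).
RECORDS = [
    ("new_device", True), ("new_device", False),
    ("new_device", True), ("new_device", False),
    ("known_device", False), ("known_device", False),
]

WORD_MODEL = next_word_model(SENTENCES)
FRAUD_MODEL = fraud_rate_model(RECORDS)
print(predict_next(WORD_MODEL, "your"))
print(FRAUD_MODEL)
```

In both cases the prediction comes from patterns and associations in past data: the word most often seen after "your", and the share of past cases with a given attribute that turned out to be fraud.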

By employing innovative fraud detection techniques, AI is pivotal in safeguarding industries against malicious activity and financial loss. But what does that safeguarding look like?

AI continually learns and adapts to new fraudulent tactics, offering a proactive and dynamic approach to safeguarding various industries. This helps them keep their finances and data safe and maintain that all-important trust between company and customer.

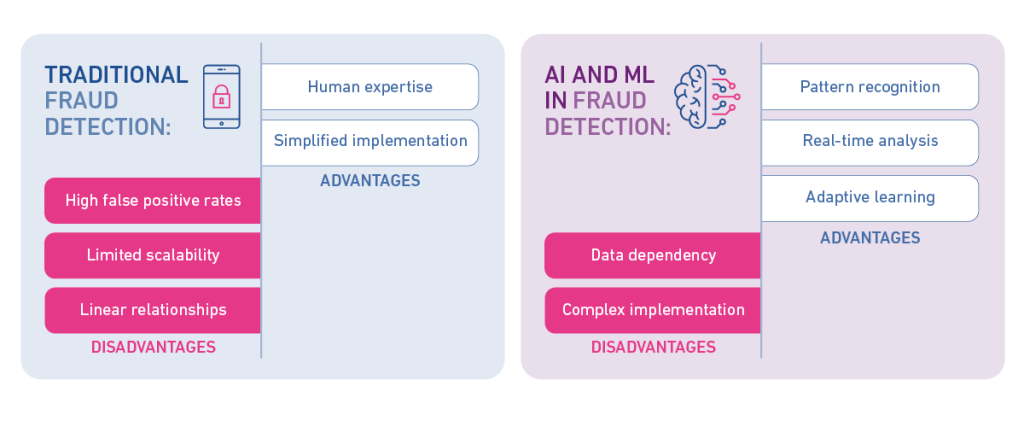

While AI and machine learning have introduced a paradigm shift in fraud detection capabilities, traditional methods still rely on rule-based systems and human intervention. But how do the two methods compare?

Teams dedicated to fraud management across various industries, from banking and financial services, to retail and healthcare, confront escalating risk levels amid a convergence of threats. Our UK Fraud Index for the first quarter of 2022 indicated a surge in fraud, particularly in areas such as cards, asset finance, and loans, which saw the highest rate in three years.

Our 2022 UK&I Identity and Fraud Report underscores a 22% increase in fraud losses in the UK since 2021, with 90% of cases originating online. The report states, “AI and Machine Learning are now key technologies for online customer identification, authentication, and fraud prevention”.

While the exposure to these risks varies, larger, more sophisticated organisations may already possess the internal expertise, technological resources, and data access required to mitigate fraud risks. Younger and mid-market entities, including start-ups and scale-ups, may have less protection, rendering them more susceptible to risk.

It’s no longer enough to be reactive; teams need to be proactive and, where possible, predictive. This is where AI and ML can help, and already are helping, businesses stay ahead of the curve.

AI and ML offer opportunities in fraud detection but also come with their own set of challenges. However, the dynamic nature of both typically offers solutions to all common challenges that arise.

Hallucination: addressing fabricated responses in AI models

Hallucination, where AI systems generate fictitious responses, poses a significant challenge when AI and ML are used for fraud detection. Efforts are underway to mitigate this risk, with various techniques being explored to restrict language models from producing inaccurate information.

Notably, during the pre-SAML and Open EO conferences, a commitment was made to support companies facing legal challenges related to copyright infringement. This financial assistance aims to aid organisations entangled in legal battles arising from deploying AI and ML models, acknowledging the risks and responsibilities associated with these technologies.

Bias and fairness: crucial considerations in AI and ML development

Fairness is paramount when developing and deploying effective AI and ML systems. Biases in data analysis can lead to incorrect conclusions. It’s key that AI and ML frameworks do not discriminate based on factors such as sex, race, religion, disability, or colour.
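One simple way to start checking for this is to compare how often a model flags people in different groups. The sketch below is a minimal illustration under stated assumptions: the decisions are invented, the group names are placeholders, and an equal flag rate is only one of several possible definitions of fairness.

```python
from collections import Counter


def flag_rates(decisions):
    """Share of cases flagged as suspicious, per group.

    decisions: iterable of (group, was_flagged) pairs.
    """
    totals, flagged = Counter(), Counter()
    for group, was_flagged in decisions:
        totals[group] += 1
        flagged[group] += int(was_flagged)
    return {group: flagged[group] / totals[group] for group in totals}


def rate_gap(rates):
    """Difference between the highest and lowest flag rate across groups."""
    return max(rates.values()) - min(rates.values())


# Hypothetical model decisions, invented for illustration:
# group_a has 2 of 10 cases flagged, group_b has 5 of 10.
DECISIONS = (
    [("group_a", True)] * 2 + [("group_a", False)] * 8
    + [("group_b", True)] * 5 + [("group_b", False)] * 5
)

RATES = flag_rates(DECISIONS)
GAP = rate_gap(RATES)
print(RATES)
print(round(GAP, 2))
```

A large gap does not prove discrimination by itself, since the groups may differ in other ways, but it is a signal that the data and the model deserve a closer review.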

Striking a balance between fostering innovation and maintaining fairness is essential. While regulations are necessary, they should not impede companies from securely conducting research. Failure to strike this balance could hinder the UK’s position in developing a globally leading AI sector.

Governance and data privacy: navigating the landscape of AI growth

The unprecedented volume of data available propels the growth of AI and machine learning. However, this surge in data raises concerns about governance and privacy. As the industry continues to harness data for AI advancements, robust governance frameworks are crucial to ensure ethical and responsible use.

As we continue to unravel the complexities of financial security, it’s important to stay informed and vigilant.

To learn more about fraud prevention tools and deepen your understanding of the innovative technologies shaping the future of security, you can find a selection of handy guides on our website or speak with an expert today.

©2025 Experian Information Solutions, Inc. All rights reserved.

Experian Ltd is authorised and regulated by the Financial Conduct Authority. Experian Ltd is registered in England and Wales under company registration number 653331. Registered office address: The Sir John Peace Building, Experian Way, NG2 Business Park, Nottingham NG80 1ZZ.